24 years ago, Ben Folds’ Rockin’ the Suburbs entered my bloodstream – and I still remember the bus ride to Montreal with the CD in my Discman. It wasn’t just the music though. Part of what mesmerized me is that the album was practically a one-man show. Ben Folds wrote, […]

Technology

There are about 30 working desktops and laptops in my house, ranging from 1993 to present, but I think the coolest computer I have is my parents’ old IBM ThinkPad 240. One of the smallest ThinkPads ever made back in 1999, this is a vintage ThinkPad. It’s still operational today […]

Over the past few weeks, I’ve been expanding my two-screen setups into three-screen setups — a laptop plus two external monitors. Because I thought I only had one display port (HDMI), I went searching for USB display adapters. (I just realized today that my ThinkPad T470 has a USB-C / […]

Printers have always been terrible. I got away without buying a new printer since 2008 or 2010… until today. I bought an HP printer, because I’ve used HP printers for a long time, and mainly because of the existence of hplip. My goal, after all, is to have no nonsense […]

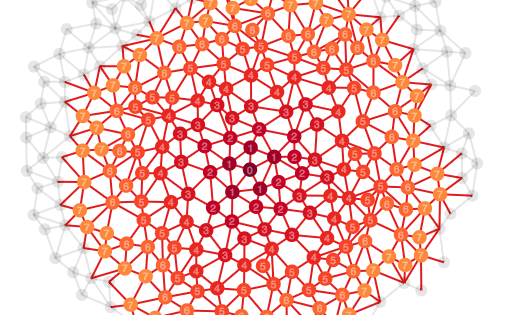

Understanding exponential growth is a prerequisite to understanding the pandemic. I’ve had many conversations about the public health response to COVID-19 over the past year. Setting aside more extreme conspiracy thinking, I’m increasingly convinced that most of the non-conspiracy skepticism of public health measures comes down to misunderstanding exponentials. People […]

In Part 1 in this series, I explained why there are no easy answers to social media censorship. The problem is not a “Big Tech” conspiracy to silence particular voices. The problem is that too much of our public discourse is mediated by private platforms — centralized, proprietary, walled gardens. […]

There are no easy answers to speech, censorship and internet freedoms given the current state of the internet. Some solutions are better than others, but there’s no perfect answer. If someone suggests there’s a simple answer, they’re probably wrong. As Harvard internet law professor Jonathan Zittrain explained in June: The […]

When I get frustrated about people’s response to public health measures, I rant on social media. When I rant on social media, some of the conversations are painful, but… others are just fantastic. Perhaps I thrive on genuine dialogue, which forces you to try to understand another person, and to […]

Since the start of the pandemic, many people have questioned the lockdowns, the emergency measures and overall government response. Especially after the curve was flattened in Canada, even more people question whether the measures are still necessary, or whether the goalposts are shifting. This defies common sense, the critique goes. […]